APIPark

Open-Source AI Gateway

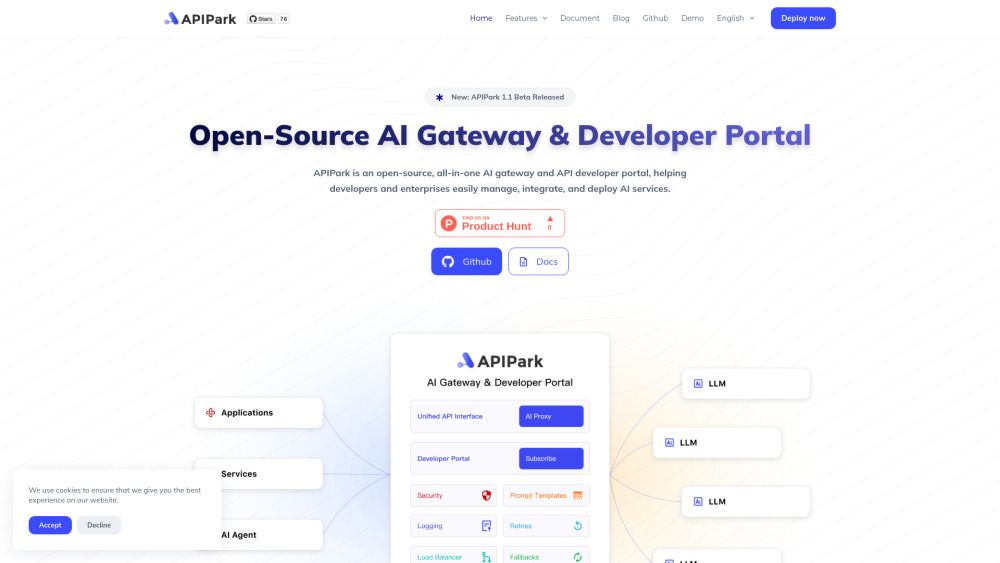

What is APIPark?

APIPark is a comprehensive LLM (Large Language Model) Gateway and API platform designed to streamline the management of LLM calls and integrate API services efficiently. It offers a range of features that provide fine-grained control over LLM usage, helping businesses reduce costs, improve operational efficiency, and prevent overuse of resources. With detailed usage analytics, users can monitor and optimize their LLM consumption effectively.

Key features of APIPark include:

Intelligent semantic caching strategies that enhance response speed and reduce LLM resource usage.

Flexible prompt management through customizable templates for easy modification.

Unified API signature for seamless integration of multiple AI models.

Efficient load balancing solutions to optimize request distribution across LLM instances.

Comprehensive API access management to ensure compliance with enterprise policies.
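To make the semantic caching idea above concrete, here is a minimal sketch. It is not APIPark's implementation: the `SemanticCache` class, the `answer` helper, the 0.8 threshold, and the bag-of-words `embed` function are all assumptions chosen for illustration. A real gateway would most likely use a proper embedding model in place of word counts.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class SemanticCache:
    """Returns a stored response when a new prompt is close enough to a cached one."""

    def __init__(self, threshold: float = 0.8):
        self.threshold = threshold  # hypothetical similarity cutoff
        self.entries = []           # list of (vector, response) pairs

    def get(self, prompt: str):
        vec = embed(prompt)
        best = max(self.entries, key=lambda e: cosine(vec, e[0]), default=None)
        if best is not None and cosine(vec, best[0]) >= self.threshold:
            return best[1]
        return None

    def put(self, prompt: str, response: str) -> None:
        self.entries.append((embed(prompt), response))


def answer(prompt: str, cache: SemanticCache, call_llm) -> str:
    """Serve from the cache when possible; otherwise call the upstream model once."""
    cached = cache.get(prompt)
    if cached is not None:
        return cached
    response = call_llm(prompt)
    cache.put(prompt, response)
    return response
```

With this sketch, two prompts that differ only in casing or punctuation share one upstream call, while an unrelated prompt still reaches the model.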

APIPark Features

APIPark's semantic caching reduces latency from upstream LLM calls, improves response times for services such as intelligent customer support, and lowers LLM resource usage. Prompts are managed through customizable templates, so they can be modified without being hardcoded in application code.
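As a rough illustration of template-based prompt management, the sketch below keeps prompts in a registry and fills in variables at call time. The registry name `PROMPT_TEMPLATES`, the `render_prompt` helper, and the `support_reply` template are hypothetical; the source does not describe APIPark's actual template format. Only Python's standard `string.Template` is used.

```python
from string import Template

# Hypothetical template registry: prompts live here, not in application code.
PROMPT_TEMPLATES = {
    "support_reply": Template(
        "You are a support agent for $product. "
        "Answer the customer in a $tone tone.\n\nCustomer: $question"
    ),
}


def render_prompt(name: str, **variables: str) -> str:
    """Fill a named template; raises KeyError if a variable is missing."""
    return PROMPT_TEMPLATES[name].substitute(**variables)
```

Changing the wording of `support_reply` then means editing one template entry, while callers keep passing the same variables.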

Key capabilities of APIPark include:

Unified API signature for connecting multiple AI models simultaneously without code modifications.

Efficient load balancing solutions that optimize request distribution across LLM instances, enhancing system responsiveness and reliability.

Fine-grained traffic control for managing LLM quotas and prioritizing specific LLM calls to ensure optimal resource allocation.

Real-time dashboards for monitoring LLM interactions and usage analytics to help optimize consumption.

Built-in billing features for tracking API usage and driving monetization.
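The quota and prioritization capability in the list above could be sketched as follows. This is an assumption-laden illustration, not APIPark's mechanism: `TrafficController`, `QuotaExceeded`, the per-consumer token budgets, and the "lower number means higher priority" convention are all invented for the example.

```python
import heapq
import itertools


class QuotaExceeded(Exception):
    """Raised when a consumer has too little quota left for a request."""


class TrafficController:
    """Hypothetical quota check plus priority ordering for pending LLM calls."""

    def __init__(self, quotas):
        self.remaining = dict(quotas)    # consumer -> tokens left in this window
        self._queue = []                 # min-heap of (priority, order, request)
        self._order = itertools.count()  # tie-breaker keeps FIFO within a priority

    def submit(self, consumer, tokens, priority, request):
        """Reserve quota and enqueue; lower priority numbers are served first."""
        if self.remaining.get(consumer, 0) < tokens:
            raise QuotaExceeded(consumer)
        self.remaining[consumer] -= tokens
        heapq.heappush(self._queue, (priority, next(self._order), request))

    def next_request(self):
        """Pop the most urgent pending request, or None if the queue is empty."""
        if not self._queue:
            return None
        return heapq.heappop(self._queue)[2]
```

Rejecting at submission time keeps an over-quota consumer from occupying queue slots, and the heap ensures interactive calls can be served ahead of batch work.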

Why APIPark?

APIPark offers a range of benefits for managing and deploying Large Language Models (LLMs). Its semantic caching reduces latency from upstream LLM calls, which improves response times for services such as intelligent customer support. Because cached responses avoid repeated calls to the model, it also lowers resource usage and cost.

Additionally, APIPark simplifies LLM call management and integrates API services efficiently, offering fine-grained control over LLM usage. This helps organizations reduce costs, improve operational efficiency, and prevent overuse. The platform also includes security measures such as data masking to protect sensitive information and support compliance with enterprise policies.

Other benefits include:

Real-time insights into LLM interactions through dashboards.

Flexible prompt management with easy-to-modify templates.

Built-in billing features for tracking API usage and monetization.

End-to-end API access management for secure sharing.

Efficient load balancing for enhanced system responsiveness.

How to Use APIPark

To get started with APIPark, use its interface to combine AI models and prompts into new APIs, which can then be shared with collaborating developers for immediate use.

Here are some key features that will help you in your initial setup:

Flexible Prompt Management: Easily manage and modify your prompts using flexible templates.

Unified API Signature: Connect multiple AI models simultaneously without modifying existing code.

Load Balancing: Optimize request distribution across multiple LLM instances for enhanced responsiveness.

Traffic Control: Configure LLM traffic quotas and prioritize specific LLMs for optimal resource allocation.
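The unified API signature and load balancing items above can be pictured with a small sketch: callers always send the same request shape, and the gateway picks an adapter and an instance. Everything named here (`ChatRequest`, `Gateway`, the two adapter functions, the model and instance names) is hypothetical and does not reflect APIPark's real interface; round-robin is just one simple balancing policy.

```python
import itertools
from dataclasses import dataclass
from typing import Callable, Dict, Iterator, List


@dataclass
class ChatRequest:
    """Single request shape used for every model (hypothetical)."""
    model: str
    prompt: str


# Hypothetical adapters that would translate the unified request
# into each provider's own format.
def call_provider_a(instance: str, prompt: str) -> str:
    return f"[{instance}] provider A: {prompt}"


def call_provider_b(instance: str, prompt: str) -> str:
    return f"[{instance}] provider B: {prompt}"


class Gateway:
    """Routes unified requests to adapters, rotating across instances."""

    def __init__(self) -> None:
        self._adapters: Dict[str, Callable[[str, str], str]] = {}
        self._instances: Dict[str, Iterator[str]] = {}

    def register(self, model: str, adapter: Callable[[str, str], str],
                 instances: List[str]) -> None:
        self._adapters[model] = adapter
        self._instances[model] = itertools.cycle(instances)  # round-robin

    def chat(self, request: ChatRequest) -> str:
        instance = next(self._instances[request.model])
        return self._adapters[request.model](instance, request.prompt)
```

Swapping `model="model-a"` for `model="model-b"` in a `ChatRequest` is the only change a caller would make to switch providers in this sketch.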

Ready to see what APIPark can do for you? Try it and experience the benefits firsthand.

Key Features

Multi-LLM Management

Cost Optimization

API Transformation

How to Use

Visit the Website

Navigate to the tool's official website

Introduction

APIPark is a comprehensive LLM Gateway and API platform designed to streamline API management and enhance security. It offers robust features such as data masking to protect sensitive information and detailed usage analytics to optimize LLM consumption, ensuring cost efficiency and compliance. With its scalable architecture, APIPark enables businesses to securely share APIs and collaborate effectively with partners.

Added on: Oct 21 2024

Company: Eolink Inc.

Monthly Visitors: 55,063+

Features: Multi-LLM Management, Cost Optimization, API Transformation